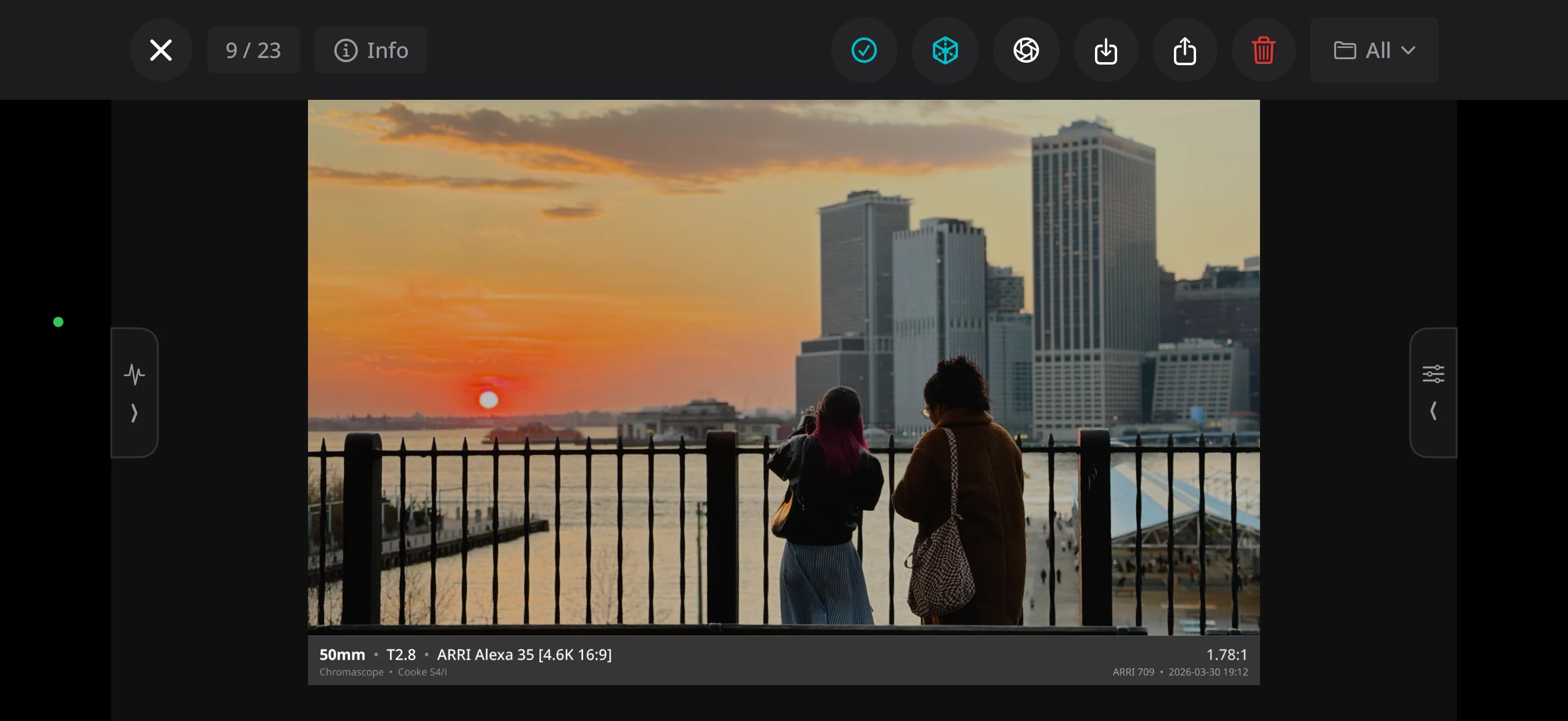

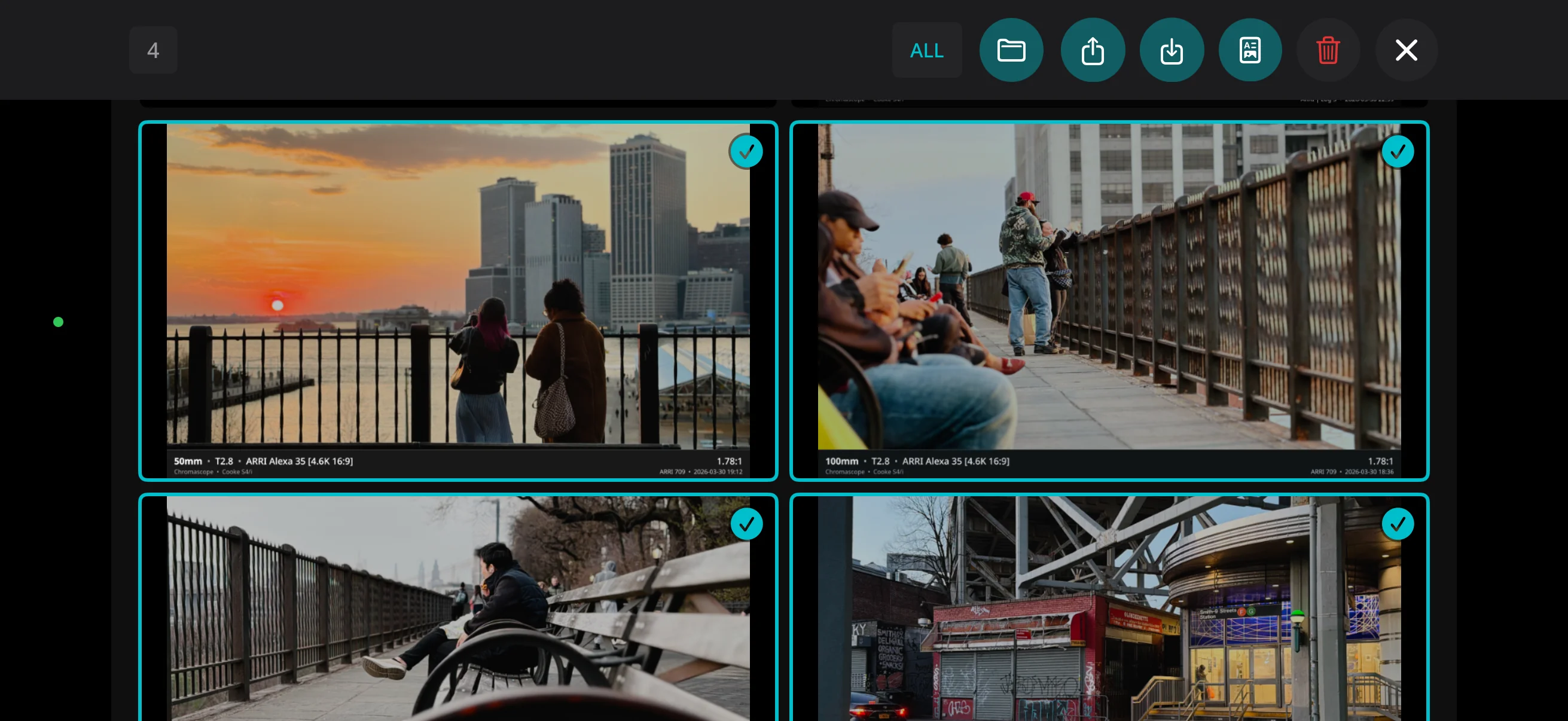

Chromascope is a director's finder for iPhone. It uses LiDAR depth and AI refinement to produce realistic shallow depth-of-field, paired with log color profiles that match real cinema cameras and lenses.

What's different

A finder should help you visualize the shot, not just draw frame lines over a phone camera feed. Chromascope simulates the optics and the sensor, so the image you frame matches the image you'll capture on shoot day.

LiDAR depth and neural refinement render shallow focus that matches your chosen lens and T-stop. Falloff tracks distance naturally, just like a real lens. Bokeh holds its shape on edges, hair, and bright points. Anamorphic modes also simulate anamorphic optical characteristics, including oval bokeh.

Log profiles are modeled on ARRI, Sony, RED, Canon, and more, so what you capture in the finder matches the color space of a real camera. Your own custom LUTs work exactly as they would on the actual camera, or you can export the capture flat to preview looks in your NLE or color software.

Also included

In the app

Beta

Send us a note and we'll get you in on the next build.

Request TestFlight Access